| Kenneth W. Church Baidu 1195 Bordeaux Drive Sunnyvale, CA 94089, USA kennethchurch@baidu.com Kenneth.Ward.Church@gmail.com |

|

Abstract: When Minsky and Chomsky were at Harvard in the 1950s, they started their careers by questioning a number of machine learning methods that have since regained popularity. Minsky's Perceptrons was a reaction to neural nets and Chomsky's Syntactic Structures was a reaction to n-gram language models. Many of their objections are being ignored and forgotten (perhaps for good reasons, and perhaps not). Future work ought to characterize what deep nets are good for (and what they aren't good for). Can we come up with a theory of generative capacity for deep nets? How much more can we generate with more layers? In practice, deep nets have been effective in vision, speech and machine translation, where (1) we have lots of data, (2) representations and scale don't matter much, and (3) nothing else has been all that effective. Conversely, deep nets are probably less appropriate when representations have been reasonably effective (e.g., symbolic calculus), or for large problems beyond finite-state complexity (e.g., sorting large lists, multiplying large matrices).

Kenneth Church's Biography

Kenneth Church has worked on many topics in computational linguistics including: web search, language modeling, text analysis, spelling correction, word-sense disambiguation, terminology, translation, lexicography, compression, speech (recognition, synthesis & diarization), OCR, as well as applications that go well beyond computational linguistics such as revenue assurance and virtual integration (using screen scraping and web crawling to integrate systems that traditionally don't talk together as well as they could, such as billing and customer care). He enjoys working with large corpora such as the Associated Press newswire (1 million words per week) and even larger datasets such as telephone call detail (1-10 billion records per month) and web logs. He earned his undergraduate and graduate degrees from MIT, and has worked at AT&T, Microsoft, Hopkins and IBM. He was the president of ACL in 2012, and of SIGDAT (the group that organizes EMNLP) from 1993 until 2011. He became an AT&T Fellow in 2001 and an ACL Fellow in 2015.

|

Piek Vossen Vrije Universiteit (VU) Amsterdam 1081 HV Amsterdam The Netherlands piek.vossen@vu.nl www.vossen.info |

Authors: Piek Vossen, Selene Baez, Lenka Bajčetić, and Bram Kraaijeveld

Abstract:

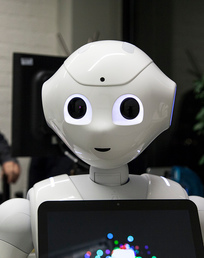

All knowledge is relative and perceptions can be wrong. Our state of mind is based on personal experiences and on what other people tell us. This may result in conflicting information, uncertainty about the truth, and alternative facts that we need to handle when processing information. We present a robot that models this relativity of knowledge and perception within social communication following the principles of the theory of mind. Theory of mind states that children, at some stage of their development (up to 48 months), become fully aware that other people's knowledge, beliefs, and perceptions can be different from theirs. This awareness is the foundation for social interaction and communication, and hence for learning through social communication. In the past, Scassellati designed a humanoid robot following the theory of mind. We take his work as our starting point for implementing these principles in a Pepper robot, in order to drive social communication. The robot uses object recognition, face detection, face recognition, voice detection, speech recognition and speech generation for its interactions. Given these capabilities, we built an interaction model that stores the knowledge and information obtained from conversation and perception in an external triple store. The robot, called Leolani, learns directly from what people tell her. For representing the acquired information, we make use of the Grounded Representation and Source Perspective (GRaSP) model that was developed in the NewsReader project. GRaSP allows us to store not only statements but also the provenance of each statement: the actual mention of the statement in the conversation, the source of the statement, and the perspective of the source towards the statement, e.g., the emotional (like/dislike/anger/sad/happy), deontic (must/should) and epistemic (belief/denial) state.
We demonstrate how the social communication of the robot is driven by a hunger to acquire more knowledge from and about people, to resolve uncertainties and conflicts, and to share its awareness of the perceived environment. Likewise, the robot can make reference to the world but also to knowledge about the world and the encounters with people that yielded this knowledge. The robot combines perception and awareness of the here and now with social learning in one-to-one communication.

Piek Vossen's Biography

Prof. Dr. Piek Vossen received his PhD (Cum Laude) in 1995 on Computational Lexicology and he is now professor at the Faculty of Humanities of the VU University of Amsterdam. Vossen is an elected member of the Royal Dutch Academy of Science (KNAW) and the Royal Holland Society of Science (KHMW). In the past, he coordinated various European projects, among which EuroWordNet-I-II (LE2 4003 and LE 8328), KYOTO (FP7, ICT-211423) and NewsReader (FP7, 316404). In 2013, he received the prestigious Spinoza-prize for his groundbreaking research on wordnets and NewsReader. He also received the Enlighten-Your-Research prize in 2013 for NewsReader's challenge to process daily streams of millions of news articles. He used the prize to establish a research group of 20 researchers who work on the semantic processing of text. His group is now working on the Reference Machine, which is a system that tries to understand events in the world from news and from communication with people. Besides his work in academia, he worked for 10 years in industry as a CTO of start-up companies, developing innovative products using language technology. Vossen is the co-founder and co-president of the Global-Wordnet-Association, which has organized 9 international conferences since 2002. He is also involved in the national infrastructure projects CLARIN-NL and CLARIAH.

|

Isabel Trancoso INESC-ID Lisboa Spoken Language Systems Lab R. Alves Redol, 9 1000-029 LISBOA, Portugal Isabel.Trancoso@inesc-id.pt |

Abstract:

Speech has the potential to provide a rich bio-marker for health, allowing a non-invasive route to early diagnosis and monitoring of a range of conditions related to human physiology and cognition. With the rise of speech-related machine learning applications over the last decade, there has been a growing interest in developing speech-based tools that perform non-invasive diagnosis. This talk covers two aspects related to this growing trend. One is the collection of large in-the-wild multimodal datasets in which the speech of the subject is affected by certain medical conditions. Our mining effort has been focused on video blogs (vlogs), and explores audio, video, text and metadata cues, in order to retrieve vlogs that include a single speaker who, at some point, admits that he/she is currently affected by a given disease. The second aspect is patient privacy. In this context, we explore recent developments in cryptography and, in particular, in Fully Homomorphic Encryption, to develop an encrypted version of a neural network trained with unencrypted data, in order to produce encrypted predictions of health-related labels. As a proof-of-concept, we have selected three target diseases (depression, Parkinson's disease, and cold) to show our results and discuss these two aspects.

Conference Photos